Picture a student teacher the morning of their first formal observation. They’ve prepared for days. The observation feels high-stakes because, in a sense, it is: observations are rare. Not because anyone has failed them, but because the math of field placement supervision makes frequent feedback hard to deliver.

A university supervisor managing a full roster of student teachers across a dozen school sites, balancing their own teaching load, can often realistically visit each candidate two to three times a semester.

Between those visits, student teachers do get support, but it comes with real limits:

- Mentor teachers are present and often helpful, but their feedback reflects a teacher working within one building’s context, not necessarily the frameworks a preparation program is building toward.

- Cohort meetings and seminar discussions give candidates space to process, but the feedback is secondhand. No one else was in the room.

What if that gap didn’t have to define the experience? How could student teachers receive specific, framework-aligned feedback after every session? Could supervisors arrive at observations with a semester’s worth of data, rather than reconstructing a narrative from two visits?

Fortunately, there’s a way to augment the traditional model to make this all possible.

The problem with episodic observation

When a student teacher knows they’ll be formally observed once or twice this semester, that session carries weight. They prepare differently, perform, and receive feedback that reflects a curated version of their practice, not necessarily the daily reality of their work.

Practically speaking, issues that surface in week three of a placement may not get identified until a supervisor visits weeks later. By then, the teacher candidate may have calcified patterns that cause persistent issues in classroom management, instructional planning, or elsewhere. A small course correction early on could have prevented the larger issue from emerging. The model also asks supervisors to draw broad conclusions from a narrow slice of evidence: two observations, however carefully conducted, are snapshots, not a portrait.

While issues with infrequent feedback are logically clear, the benefits of frequent feedback are research-backed.

- John Hattie’s Visible Learning synthesis puts feedback at an effect size of 0.70, among the highest-impact interventions identified across decades of educational research.

- Wilcoxen and Lemke reached a similar finding: ongoing, process-focused feedback raises performance in ways that summative evaluation simply cannot.

- Schaefer and Clandinin found that regular observations with constructive feedback were highly valued by beginning teachers.

- Garcia and Weiss reported that the highest-performing education systems in the world provide feedback as part of the regular experience of teaching.

A key theme in this body of research is that feedback should be regular and ongoing. Feedback works when it’s frequent, specific, and connected to real practice.

For student teachers, the implication is direct: a placement evaluated through two observations is, by design, a low-feedback environment, which is less conducive to growth.

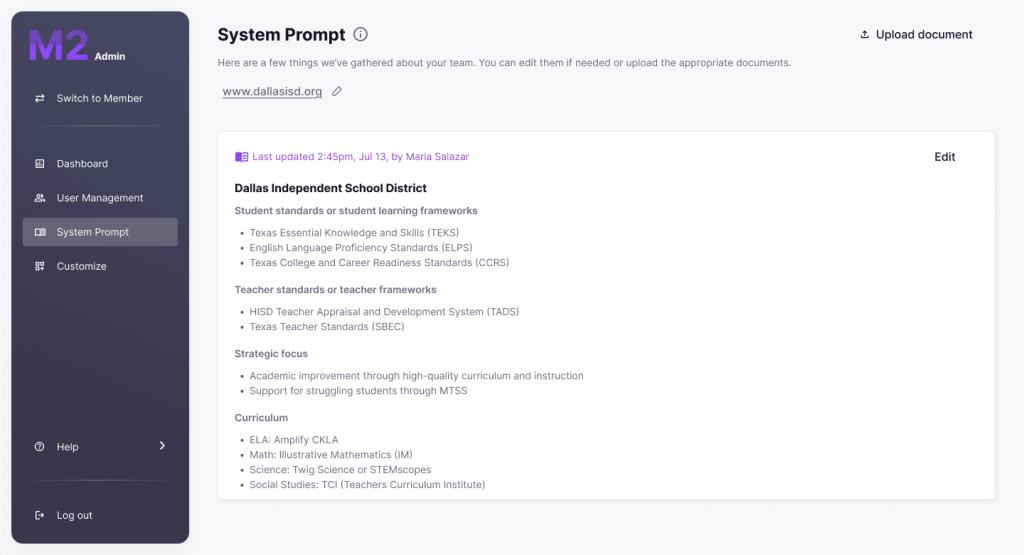

What three to four months of M2 looks like instead

M2 gives student teachers something the traditional model doesn’t: a feedback loop that runs alongside their practice rather than interrupting it once or twice a semester.

When student teachers use M2 weekly, they receive AI-powered feedback after every session on:

- Questioning techniques

- Participation patterns

- Instructional pacing

- Classroom dynamics

They start identifying their own tendencies before a supervisor ever needs to name them. Early in a placement, when there’s the most room for improvement, that kind of immediate, low-stakes reflection has an outsized impact.

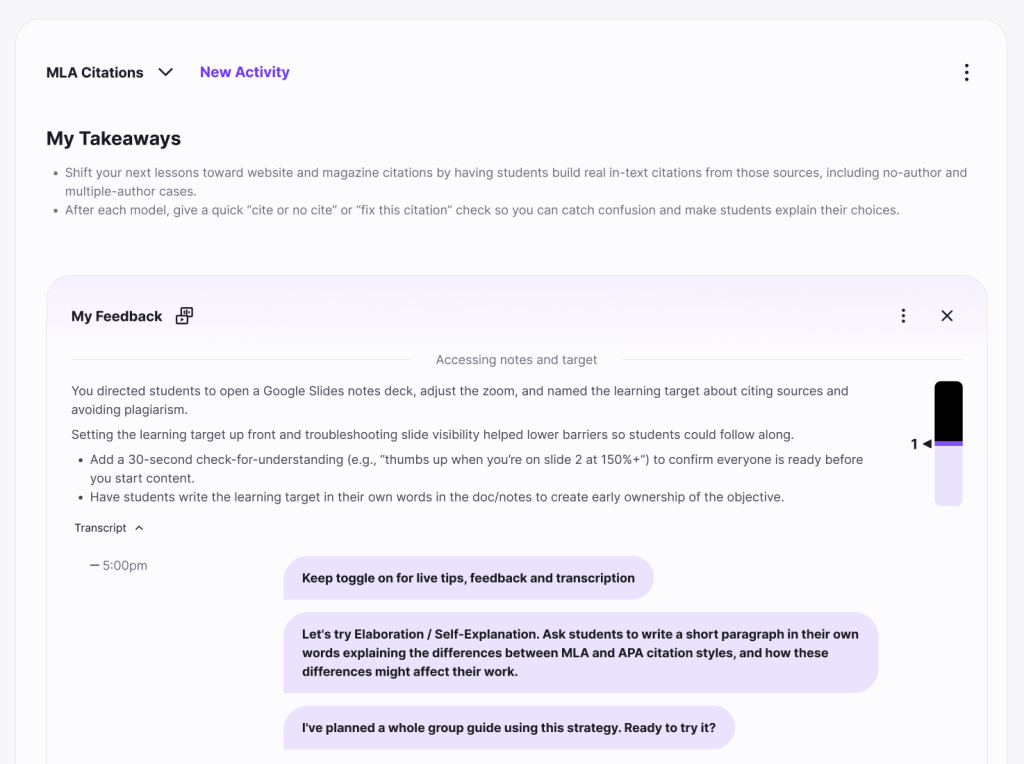

A typical M2 feedback session might surface something like:

*“Students responded well to your opening question, but whole-class participation dropped during the independent work phase. Consider building in a structured think-pair-share before returning to whole-group discussion.”*

That’s the kind of specific, actionable language student teachers can act on immediately. It also maps directly onto the competencies supervisors are already evaluating. M2 feedback aligns to standard teacher preparation frameworks like Danielson and Marzano, connecting AI insights to the rubrics programs are already using.

M2 doesn’t replace formal observations or human judgment. What it gives supervisors is a richer evidence base: a complete arc of real sessions, real classrooms, real decisions, not two curated clips. When coaching conversations are grounded in session-by-session data rather than one visit from three weeks ago, they become more specific, more productive, and more useful to the student teacher sitting across the table. Dr. Natalie Bolton’s experience shows what this looks like in practice.

The habit that carries forward

The question a pre-service program has to answer is clear: do our teachers leave with the skills needed to teach today, and the mindset to get better tomorrow?

Teaching rewards people who treat their practice as something worth examining. The teachers who grow most consistently over a career are those who stayed genuinely curious about their own work. That disposition is easiest to form at the very beginning, before other habits are established.

The structure of a field placement helps to shape it. When student teachers receive feedback weekly, they internalize a simple but durable lesson: looking closely at their practice is a normal part of the job. When formal observations are the only occasion for feedback, they internalize that evaluation is rare, high-stakes, and something to prepare for rather than learn from. Whichever lesson they take from a placement is the one they’re more likely to carry forward.

A semester of weekly M2 sessions is a semester spent building that habit. The argument for continuous feedback goes beyond this semester. It’s a foundation for everything that comes after.